Born from the need to overcome the limitations of traditional recurrent neural networks (RNNs), Long Short-Term Memory networks (LSTMs) have become the backbone of many AI applications, from voice assistants to stock market prediction.

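An LSTM cell is more complex than a simple RNN unit. It contains three main gates:

- Forget gate: decides which information to discard from the cell state.
- Input gate: decides which new information to write into the cell state.
- Output gate: decides which part of the cell state to expose as the cell's output (the hidden state).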

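Each gate uses a sigmoid activation, which outputs values between 0 and 1 and acts as a soft switch on how much information passes through. Tanh activations squash the candidate values and the cell's output into the range -1 to 1.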

Imagine a conveyor belt (the cell state) running through a factory. The gates are like workers who decide what to keep, what to throw away, and what to send to the next station.
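The gate mechanics fit in a few lines of NumPy. Below is a minimal sketch of one forward time step of a standard LSTM cell. The function name, the stacked weight layout, and the gate order (input, forget, candidate, output) are our own illustrative assumptions; real libraries store and order their weights differently.

```python
import numpy as np

def sigmoid(x):
    return 1.0 / (1.0 + np.exp(-x))

def lstm_cell_step(x_t, h_prev, c_prev, W, U, b):
    """One time step of a standard LSTM cell (illustrative sketch).

    W: (4*H, D) input weights, U: (4*H, H) recurrent weights, b: (4*H,) bias,
    stacked in the assumed order: input, forget, candidate, output.
    """
    H = h_prev.shape[0]
    z = W @ x_t + U @ h_prev + b      # all four pre-activations at once
    i = sigmoid(z[0:H])               # input gate: how much new info to write
    f = sigmoid(z[H:2 * H])           # forget gate: how much of c_prev to keep
    g = np.tanh(z[2 * H:3 * H])       # candidate values in (-1, 1)
    o = sigmoid(z[3 * H:4 * H])       # output gate: how much of the cell to expose
    c_t = f * c_prev + i * g          # update the "conveyor belt" (cell state)
    h_t = o * np.tanh(c_t)            # hidden state passed to the next step
    return h_t, c_t

# Run the cell over a short random sequence (toy sizes: 3 input features, 4 units)
rng = np.random.default_rng(0)
D, H = 3, 4
W = rng.normal(size=(4 * H, D))
U = rng.normal(size=(4 * H, H))
b = np.zeros(4 * H)
h, c = np.zeros(H), np.zeros(H)
for x_t in rng.normal(size=(5, D)):   # a sequence of 5 input vectors
    h, c = lstm_cell_step(x_t, h, c, W, U, b)
print(h.shape, c.shape)  # (4,) (4,)
```

Because the hidden state is a sigmoid output times a tanh, every entry of `h` stays strictly between -1 and 1, while the cell state `c` is free to grow as information accumulates.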

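Traditional RNNs are notoriously bad at remembering information from early in a sequence, largely because the gradients used to train them shrink exponentially as they are propagated back through many time steps (the vanishing gradient problem). For example, in a long sentence, an RNN might lose track of the subject by the time it reaches the verb. LSTMs address this with a memory cell that is updated mostly additively under the control of the forget gate, so relevant information can be carried across many time steps.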
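A toy scalar calculation illustrates why the cell state helps. It follows only the direct gradient path and uses assumed values (recurrent weight 0.9, pre-activation 1.0, forget-gate activation 0.98), so treat it as an illustration rather than a measurement of any real network.

```python
import numpy as np

steps = 50

# Vanilla RNN, h_t = tanh(w * h_{t-1} + ...): each step multiplies the gradient
# by w * tanh'(a) = w * (1 - tanh(a)**2). Assumed toy values: w = 0.9, a = 1.0.
w, a = 0.9, 1.0
rnn_factor = w * (1 - np.tanh(a) ** 2)   # about 0.38 per step
rnn_grad = rnn_factor ** steps

# LSTM cell state, c_t = f_t * c_{t-1} + i_t * g_t: along the direct
# cell-state path, dc_t / dc_{t-1} = f_t. Assumed forget-gate value near 1.
f = 0.98
lstm_grad = f ** steps

print(f"RNN gradient after {steps} steps: {rnn_grad:.1e}")
print(f"LSTM cell-state gradient after {steps} steps: {lstm_grad:.2f}")
```

The RNN factor compounds to something vanishingly small, while a forget gate held near 1 lets the signal survive; the network learns when to keep the forget gate open.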

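Their ability to carry information across long spans makes LSTMs well suited to sequence tasks such as speech recognition, text analysis (for example, sentiment analysis), and time series forecasting.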

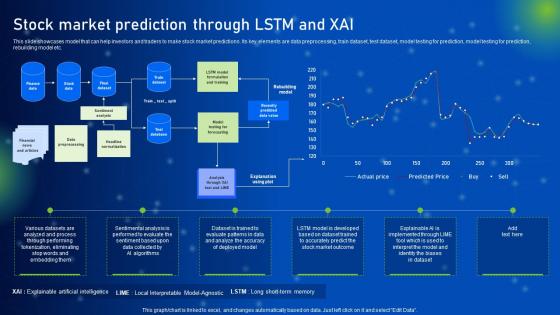

Suppose you want to predict a stock's price from its past performance. An LSTM can be trained on historical price data to learn patterns over time, such as seasonal trends or reactions to market events. Once trained, it can produce forecasts that, in some settings, are more accurate than those of simpler models; whether it actually does so on your data has to be checked on held-out prices.
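Keras LSTM layers expect input shaped (samples, timesteps, features), so a raw price series first has to be cut into overlapping windows. A minimal sliding-window sketch, assuming a single feature (the price itself) and a one-step-ahead target; `make_windows` is a hypothetical helper, and in practice you would usually scale the prices (for example to the 0 to 1 range) before windowing.

```python
import numpy as np

def make_windows(series: np.ndarray, timesteps: int):
    """Split a 1-D series into (X, y): each X row holds `timesteps` past
    values, and the matching y is the value that comes right after them."""
    n = len(series) - timesteps
    if n <= 0:
        raise ValueError("series must be longer than timesteps")
    X = np.stack([series[i:i + timesteps] for i in range(n)])
    y = series[timesteps:]
    # Add a trailing feature axis: X -> (samples, timesteps, 1), y -> (samples, 1)
    return X[..., np.newaxis], y[:, np.newaxis]

prices = np.arange(10, dtype=float)   # stand-in for a real price history
X, y = make_windows(prices, timesteps=3)
print(X.shape, y.shape)  # (7, 3, 1) (7, 1)
```

The first window is `[0, 1, 2]` with target `3`, the second `[1, 2, 3]` with target `4`, and so on.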

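```python
# LSTM-based stock prediction with Keras
import numpy as np
from tensorflow.keras.models import Sequential
from tensorflow.keras.layers import Input, LSTM, Dense

timesteps, features = 30, 1  # e.g. 30 past days of one feature (closing price)

# Placeholder data so the snippet runs; replace with windows of real, scaled prices
X_train = np.random.rand(500, timesteps, features).astype("float32")
y_train = np.random.rand(500, 1).astype("float32")

model = Sequential()
model.add(Input(shape=(timesteps, features)))
# First LSTM returns its output at every time step, so the next LSTM receives a sequence
model.add(LSTM(50, return_sequences=True))
# Second LSTM returns only its final hidden state
model.add(LSTM(50))
# One output unit: the predicted next price
model.add(Dense(1))

model.compile(optimizer="adam", loss="mean_squared_error")
model.fit(X_train, y_train, epochs=20, batch_size=32)
```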
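To check whether the LSTM really beats a simpler model, compare it with a naive baseline. A common one for prices is persistence: predict that the next value equals the last observed one. The sketch below assumes inputs shaped (samples, timesteps, 1) as in the model above; `persistence_mse` is a hypothetical helper, not a Keras function.

```python
import numpy as np

def persistence_mse(X: np.ndarray, y: np.ndarray) -> float:
    """Mean squared error of predicting each target as the last value of its window."""
    last = X[:, -1, 0]            # last observed value in each window
    return float(np.mean((y[:, 0] - last) ** 2))

# Two tiny windows: [1, 2] -> 3 and [2, 4] -> 5; persistence is off by 1 each time
X = np.array([[[1.0], [2.0]], [[2.0], [4.0]]])
y = np.array([[3.0], [5.0]])
print(persistence_mse(X, y))  # 1.0
```

On real data, compute this on a held-out test set and compare it with the model's test loss, for example from `model.evaluate(X_test, y_test)` (with the loss above, this reports mean squared error; `X_test` and `y_test` are hypothetical held-out arrays). An LSTM that cannot beat persistence is not adding value.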

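Despite their power, LSTMs are not without flaws. They are computationally expensive: each time step depends on the previous one, so the computation cannot be parallelized across the sequence, and each layer has four times the parameters of a simple RNN layer of the same size. As a result, training can be slow. Moreover, gating mitigates vanishing gradients but does not eliminate them, so LSTMs can still struggle with very long sequences.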
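The parameter cost is easy to compute. The sketch below uses the formula for an LSTM layer with one bias vector per gate block, as in Keras (PyTorch uses two bias vectors, so its counts differ slightly), and applies it to the stacked stock model, assuming one input feature. The function names are our own.

```python
def lstm_param_count(input_dim: int, units: int) -> int:
    # Four blocks (input, forget, candidate, output), each with input weights,
    # recurrent weights, and one bias vector
    return 4 * (units * (input_dim + units) + units)

def simple_rnn_param_count(input_dim: int, units: int) -> int:
    # A single block of input weights, recurrent weights, and bias
    return units * (input_dim + units) + units

first = lstm_param_count(1, 50)     # first LSTM layer: 10400
second = lstm_param_count(50, 50)   # second LSTM layer: 20200
dense = 50 * 1 + 1                  # Dense(1) on 50 inputs: 51
total = first + second + dense
print(total)                          # 30651
print(simple_rnn_param_count(1, 50))  # 2600
```

The 4x factor comes from the three gates plus the candidate values, each needing its own weights. Keras `model.summary()` should report the same per-layer counts for this model.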

These limitations have led to the rise of newer architectures such as Transformers, which use attention mechanisms to connect every position in a sequence directly to every other position, modeling long-range dependencies while processing the whole sequence in parallel. Yet LSTMs remain a staple in many applications, especially where data is limited or where real-time, step-by-step processing is crucial.
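For contrast with the LSTM's step-by-step recurrence, here is a minimal NumPy sketch of scaled dot-product attention, the core operation in Transformers. It omits the learned query/key/value projections, multiple heads, and masking of a full Transformer layer, and `scaled_dot_product_attention` is our own illustrative function, not a library API.

```python
import numpy as np

def scaled_dot_product_attention(Q, K, V):
    """Q, K: (T, d_k), V: (T, d_v). Returns outputs (T, d_v) and weights (T, T)."""
    d_k = Q.shape[-1]
    # One matrix product scores every position against every other position
    scores = Q @ K.T / np.sqrt(d_k)
    # Row-wise softmax (subtract each row's max for numerical stability)
    scores = scores - scores.max(axis=-1, keepdims=True)
    weights = np.exp(scores)
    weights = weights / weights.sum(axis=-1, keepdims=True)
    return weights @ V, weights

rng = np.random.default_rng(0)
T, d = 6, 8
X = rng.normal(size=(T, d))
out, w = scaled_dot_product_attention(X, X, X)  # self-attention without projections
print(out.shape, w.shape)  # (6, 8) (6, 6)
```

There is no loop over time steps: position 1 can draw on position 6 in a single step, whereas an LSTM has to pass that information through five intermediate cell updates.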

LSTMs represent a pivotal moment in the history of deep learning. By enabling machines to remember and process sequences more effectively, they opened the door to a new era of AI applications. While newer models may be taking the spotlight, LSTMs continue to be a reliable workhorse in the AI toolbox.
